Lately, there’s been a lot of talk about context engineering. Anthropic recently released an impressive multi-agent research system, and LangChain has published some strong resources on the subject. I thought it would be useful to bring these ideas together and break down how multi-agent systems actually make use of context engineering.

In this blog post, I’ll cover:

What context engineering actually is

A case study of what Anthropic built that uses it well

The four main types of context engineering, with examples from Anthropic’s approach

What Is Context Engineering?

In short: context engineering is the practice of managing the information an LLM has access to so that it produces better outputs.

"Context engineering is the delicate art and science of filling the context window with just the right information for the next step." — Andrej Karpathy.

If the LLM is the CPU, its context window is the RAM. It’s powerful, but limited. And just like RAM, if you overload it, performance tanks.
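
To make the RAM analogy concrete, here is a minimal sketch of a fixed context budget. The `estimate_tokens` and `fit_to_budget` helpers are hypothetical, and the ratio of four characters per token is a rough assumption for illustration, not how any real tokenizer counts.

```python
def estimate_tokens(text: str) -> int:
    # Assumption: roughly 4 characters per token; real tokenizers differ.
    return max(1, len(text) // 4)


def fit_to_budget(messages: list[str], budget: int) -> list[str]:
    """Keep the most recent messages whose combined estimate fits the budget."""
    kept: list[str] = []
    used = 0
    for message in reversed(messages):  # walk from newest to oldest
        cost = estimate_tokens(message)
        if used + cost > budget:
            break
        kept.append(message)
        used += cost
    return list(reversed(kept))  # restore chronological order


history = ["a" * 40, "b" * 40, "c" * 40]  # about 10 estimated tokens each
trimmed = fit_to_budget(history, budget=20)
print(len(trimmed))  # only the two newest messages fit
```

Dropping the oldest messages is the bluntest possible policy. The techniques later in this post are ways to decide more carefully what stays in the window.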

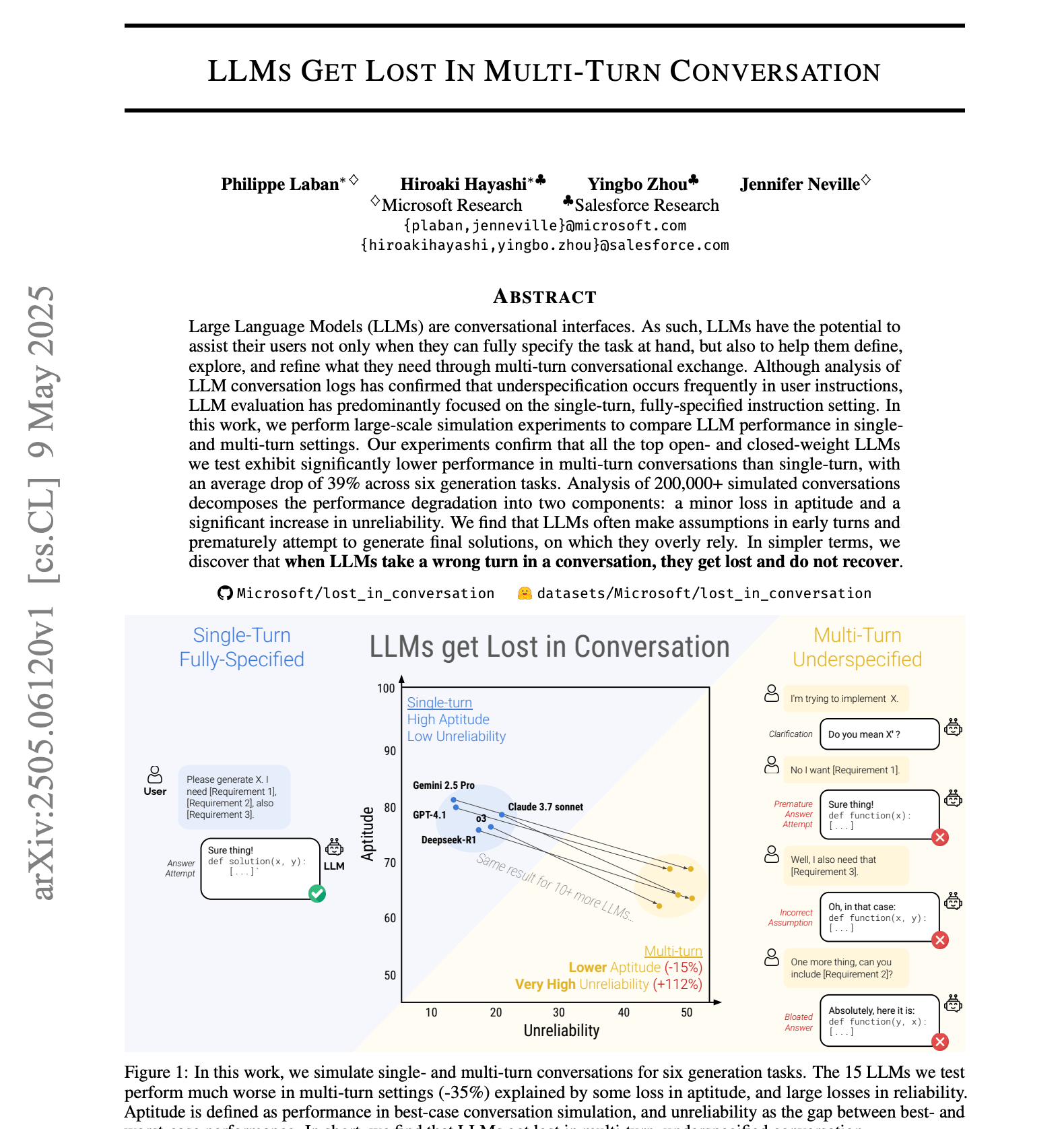

Research supports this: the Microsoft and Salesforce paper LLMs Get Lost in Multi-Turn Conversation found that model performance dropped by an average of 39% when a task was spread across multiple turns instead of being given in a single prompt.

Case Study: Anthropic’s Multi-Agent Research System

Anthropic recently published a blog post, “How we built our multi-agent research system.” It describes a research tool that includes some excellent examples of context engineering.

And this is how it works: “When a user submits a query, the lead agent analyzes it, develops a strategy, and spawns subagents to explore different aspects simultaneously. As shown in the diagram above, the subagents act as intelligent filters by iteratively using search tools to gather information, in this case on AI agent companies in 2025, and then returning a list of companies to the lead agent so it can compile a final answer.”

For a single agent, this task can be very broad, and the context would overload quickly, reducing both accuracy and efficiency.

Anthropic solved this by creating a team of cooperating agents, each applying different context engineering techniques. Instead of one agent trying to do everything, they decomposed the task across multiple specialised sub-agents, orchestrated by a lead researcher agent.
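
The lead-agent and sub-agent pattern can be sketched roughly as follows. This is not Anthropic’s implementation: `plan_subtasks` and `run_subagent` are hypothetical stubs standing in for LLM and search-tool calls, and a thread pool is just one simple way to run workers in parallel.

```python
from concurrent.futures import ThreadPoolExecutor

ASPECTS = ("find companies", "map industries", "gather product descriptions")


def plan_subtasks(query: str) -> list[str]:
    # Hypothetical planner: a real lead agent would ask an LLM to decompose the query.
    return [f"{aspect}: {query}" for aspect in ASPECTS]


def run_subagent(subtask: str) -> str:
    # Hypothetical worker: a real sub-agent would call search tools in a loop.
    return f"findings for [{subtask}]"


def lead_agent(query: str) -> str:
    subtasks = plan_subtasks(query)
    # Sub-agents run in parallel, each receiving only its own subtask.
    with ThreadPoolExecutor(max_workers=len(subtasks)) as pool:
        findings = list(pool.map(run_subagent, subtasks))  # map keeps input order
    # The lead agent compiles the returned findings into one answer.
    return "\n".join(findings)


report = lead_agent("AI agent companies in 2025")
print(report)
```

The point of the structure is that the lead agent only ever sees the short findings strings, never the full working context of each worker.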

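In Anthropic’s internal research evaluation, this system (Claude Opus 4 as the lead agent with Claude Sonnet 4 sub-agents) outperformed a single Claude Opus 4 agent by 90.2%, and Anthropic notes that the approach works especially well for breadth-first queries. For example, when asked to identify the board members of the Information Technology companies in the S&P 500, the multi-agent system succeeded while the single agent failed with slow, sequential searches.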

The Four Types of Context Engineering with Examples

Now that you have more context (no pun intended), let’s go over some core examples of context engineering that were proposed by LangChain and how they apply to this system.

Type 1: Write Context

This type is about writing important details into external memory (like a database, vector store, or file system) instead of forcing everything into the LLM’s limited context window.

How Anthropic applied it:

The lead researcher agent created a detailed plan.

Because the context window can be truncated during a long run, the plan was saved to external memory.

Sub-agents could then access it later as needed.

This ensured continuity across hours or even days of work. Without it, long workflows would collapse once the context refreshed.
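
A minimal sketch of the write pattern, assuming a plain JSON file as the external memory. The `PlanMemory` class, the file name, and the plan fields are all hypothetical; a database or vector store would play the same role.

```python
import json
import tempfile
from pathlib import Path


class PlanMemory:
    """Hypothetical external memory backed by a JSON file."""

    def __init__(self, path: Path) -> None:
        self.path = path

    def save(self, plan: dict) -> None:
        self.path.write_text(json.dumps(plan, indent=2), encoding="utf-8")

    def load(self) -> dict:
        return json.loads(self.path.read_text(encoding="utf-8"))


memory = PlanMemory(Path(tempfile.gettempdir()) / "research_plan.json")
memory.save({
    "goal": "AI agent companies in 2025",
    "steps": ["find companies", "map industries"],
})

# Later, possibly after the context window has been reset, the plan is read back.
restored = memory.load()
print(restored["steps"])
```

Because the plan lives outside the model’s context, losing or trimming the conversation history does not lose the plan.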

Type 2: Select Context

This type is about giving agents only the information relevant to their current step, usually via RAG.

How Anthropic applied it:

The lead agent divided the work into parts.

One sub-agent received only the instruction to “find companies.”

Another got “map industries.”

Another handled “gather product descriptions.”

By keeping each sub-agent’s context lean, they avoided the “stuff everything into context and hope for the best” trap that wastes tokens and reduces reasoning quality.
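
As a rough illustration of selection, the sketch below ranks stored notes by word overlap with the task and passes along only the best matches. Word overlap is a deliberately crude stand-in for the embedding search a real RAG pipeline would use, and `score`, `select_context`, and the sample notes are made up for this example.

```python
def score(task: str, note: str) -> int:
    # Count the words the task and the note share (case-insensitive).
    return len(set(task.lower().split()) & set(note.lower().split()))


def select_context(task: str, notes: list[str], top_k: int = 2) -> list[str]:
    """Return up to top_k notes that share at least one word with the task."""
    ranked = sorted(notes, key=lambda note: score(task, note), reverse=True)
    return [note for note in ranked[:top_k] if score(task, note) > 0]


notes = [
    "companies building AI agents raised funding in 2025",
    "industries adopting agents include finance and healthcare",
    "the office lunch menu changes on Friday",
]

selected = select_context("find companies building agents", notes)
print(selected)  # the lunch-menu note is never sent to the sub-agent
```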

Type 3: Compress Context

When information is too long to fit in context, agents compress it into summaries, key points, or structured data.

How Anthropic applied it:

Sub-agents gathered results and summarised them before sending them back to the lead researcher.

Instead of dumping 20 raw company profiles, they returned concise structured summaries.
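
Here is a small sketch of what returning a structured summary instead of a raw profile could look like. The `CompanySummary` fields, the `compress_profile` helper, and the sample company are assumptions for illustration; a real sub-agent would most likely ask an LLM to write the summary rather than cut the text by rule.

```python
from dataclasses import dataclass


@dataclass
class CompanySummary:
    name: str
    industry: str
    one_liner: str


def compress_profile(raw: dict, max_chars: int = 80) -> CompanySummary:
    # Hypothetical rule-based compression: keep three fields and
    # shorten the description to its first sentence.
    first_sentence = raw["description"].split(". ")[0].rstrip(".")
    return CompanySummary(raw["name"], raw["industry"], first_sentence[:max_chars])


raw_profile = {
    "name": "ExampleCo",  # made-up company
    "industry": "developer tools",
    "description": "ExampleCo builds coding agents. It was founded by two engineers. " * 20,
    "page_html": "<html>...</html>" * 500,
}

summary = compress_profile(raw_profile)
print(summary)
```

The lead researcher receives a few dozen characters per company instead of thousands, which is what keeps its own context from filling up.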

Type 4: Isolate Context

Not all agents need access to the same information. With isolated context, each agent works with scoped memory relevant only to its role.

How Anthropic applied it:

A dedicated citation agent only tracked references and sources.

It didn’t need company details, pricing, or verticals; it only needed to validate citations.

This isolation avoided clutter and contradictions, keeping each agent lean and focused.
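
One simple way to picture isolation is a shared state where each role can only see the keys it has been granted. The state layout, the `AGENT_SCOPES` table, and the `pricing_agent` role below are hypothetical and only illustrate the idea.

```python
SHARED_STATE = {
    "company_details": ["ExampleCo: coding agents"],  # made-up data
    "pricing": ["ExampleCo: $20 per seat"],
    "sources": ["https://example.com/report"],
}

# Assumption: each role declares which keys of the shared state it may see.
AGENT_SCOPES = {
    "citation_agent": {"sources"},
    "pricing_agent": {"company_details", "pricing"},
}


def scoped_context(agent: str) -> dict:
    """Return only the slice of shared state that the agent's role allows."""
    allowed = AGENT_SCOPES[agent]
    return {key: value for key, value in SHARED_STATE.items() if key in allowed}


citation_view = scoped_context("citation_agent")
print(citation_view)  # contains sources only, no company details or pricing
```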

The Outcome

By combining these four techniques (write, select, compress, and isolate), Anthropic built a system that could do far more than any single agent could.

I’ve observed the same pattern in our own research at Coral Protocol. Our GAIA benchmark experiments show that networks of smaller, specialized agents with strong context engineering outperform setups that rely purely on larger models.

The lesson is clear: smarter context engineering lets you build far more powerful and reliable systems than ever before.

You can find a ton of great resources that were used to create this blog below!

Watch the Full Breakdown

If you’d prefer a visual walk-through, here’s a YouTube explainer covering this topic!

Connect with me:

X/Twitter: @omni_georgio

LinkedIn: romejgeorgio

Instagram: @omni_georgio
